What is a Context Window?

If you've been working with AI coding assistants, you've likely heard the term "context window". But what exactly is it?

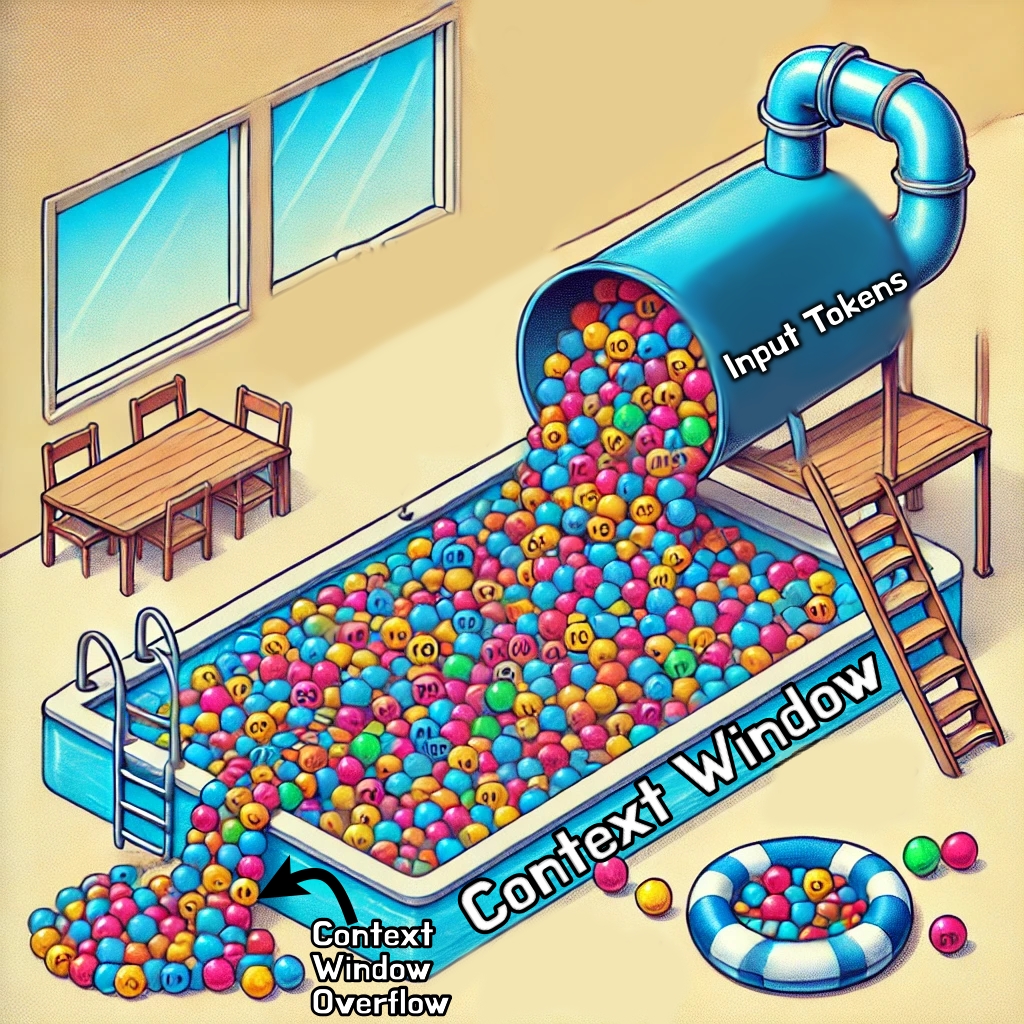

A context window is essentially the AI's working memory. It's the total amount of text (measured in tokens) that the model can process at once, including everything in your conversation: your prompts, the AI's responses, any code or files you've shared, and system instructions. Think of it like a whiteboard with limited space. Everything the AI needs to understand your request has to fit on that whiteboard.

When you start a fresh conversation, the whiteboard is mostly empty. As you chat, share files, and build context, that whiteboard fills up. Once it's full, older content gets pushed off to make room for new information. This is why a long coding session can sometimes feel like the AI has "forgotten" things you discussed earlier.

Why Context Management Matters

For simple one-off questions, context windows aren't much of a concern. But for agentic coding, where an AI autonomously explores codebases, runs commands, and iterates on solutions, context management becomes critical.

Here's why:

Performance degrades with bloat. As your context fills with irrelevant information, the AI has to sift through more noise to find the signal. This can lead to less accurate responses and the model losing track of important details.

Cost scales with context. Most AI APIs charge based on token usage. A bloated context means you're paying for the AI to process information it doesn't need.

Long contexts can cause drift. When the AI has too much competing information, it may start mixing up details or referencing outdated parts of the conversation.

Speed decreases. Processing more tokens takes more time. A lean context means faster responses.

Strategies for Keeping Context Lean

Here are some practical approaches I've found helpful when working with AI coding assistants:

Start fresh when changing tasks. If you're switching from debugging a backend issue to working on frontend styling, consider starting a new conversation. The context from your database debugging session won't help with CSS and will only add noise.

Be specific with file requests. Instead of dumping entire files into the conversation, point the AI to specific functions or sections. "Look at the handleSubmit function in form.js" is better than "here's my entire form component."

Summarize before continuing. If you've been working on something complex and need to continue later, ask the AI to summarize the current state. You can use that summary to bootstrap a fresh conversation.

Clean up dead ends. If you explored an approach that didn't work out, the details of that failed attempt are still taking up space. Consider noting what didn't work briefly and moving on in a new context.

Use task-specific conversations. Rather than one mega-session for your entire project, break work into focused conversations: one for the API layer, one for tests, one for documentation.

Let the AI manage its own context. Modern agentic tools often have built-in context management. Trust them to summarize and compress when needed rather than manually micromanaging everything.

Industry Recommendations

Current best practices suggest treating context like any other limited resource. The general guidance from teams building AI coding tools:

- Keep conversations focused on single tasks or closely related tasks

- Provide relevant context upfront rather than drip-feeding information

- Use the AI's ability to read files directly rather than pasting large blocks of code

- Reset context when you notice response quality degrading

- Take advantage of features like conversation summaries and context compression when available

Most modern AI coding assistants now handle context intelligently, automatically summarizing older parts of conversations to preserve the most relevant information. But understanding what's happening under the hood helps you work with these systems more effectively.

A Rapidly Evolving Landscape

Here's the thing: everything I've written above might be outdated within months. The AI space is moving at an unprecedented pace. Context windows that were 4,000 tokens a couple years ago are now measured in hundreds of thousands or even millions of tokens. New techniques for context compression and retrieval are emerging constantly.

What matters today is building good habits around context awareness, understanding the fundamentals of how these systems work, and staying adaptable. The specific numbers and limitations will change, but the principle of working efficiently with your AI's attention will remain relevant.

Keep experimenting, keep learning, and don't get too attached to any single workflow. The tools are evolving fast, and the developers who thrive will be the ones who evolve with them.